Your Best AI Work Should Be Reusable

How useful AI work gets shared

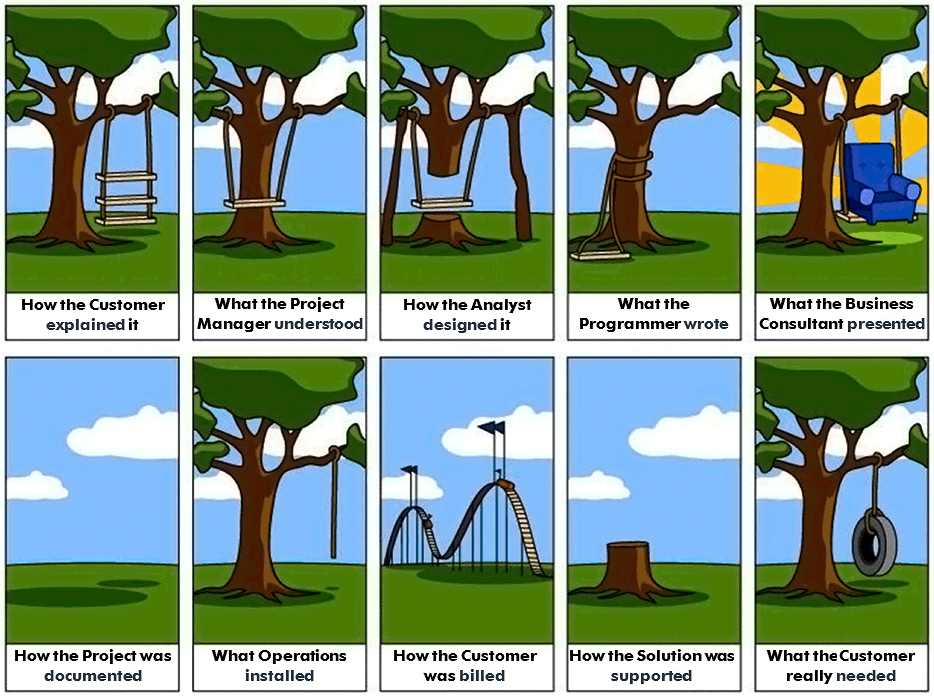

Most company context still gets transmitted like a game of telephone.

“AI-native” is one of those phrases that everyone wants to use and almost nobody defines.

Usually it means something like: everyone has ChatGPT, engineering uses Cursor, there are a few internal prototypes floating around, and somebody is wiring up some MCP servers. That’s all useful. It can make people faster, and it can make a team feel like it’s moving in the right direction.

But I don’t think that’s enough.

For a company to be truly AI-native, important context has to stop living as tribal knowledge scattered across Slack threads, docs, and the heads of a few tenured employees, and start living in systems that both humans and agents can use to do real work.

That means the company gets better when context is captured once and reused many times. When someone figures out how to query the right metric, investigate an incident, or work around some ugly internal API, that knowledge should not stay trapped with them. Over time, the company becomes easier to operate because the context is easier to share, execute, and improve.

That’s the part of Statespace I find most interesting.

Build the app locally

Most useful Statespace apps should start locally.

You run `statespace init`, point your coding agent at the app, and iterate quickly. You talk to it, teach it the domain, refine the tools, tighten the guardrails, and shape the behavior until it does something genuinely useful. That local loop matters a lot. It’s the fastest way to turn half-formed operational knowledge into something concrete.

Statespace should make that loop easy. Your coding agent can generate the Markdown and regexes and keep evolving the app with you instead of forcing you to author every file by hand.
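To make "regexes" and "guardrails" a little more concrete, here is a minimal sketch of the general idea: an allowlist of command patterns that an agent's tool calls must match in full. This is not Statespace's actual file format or API. The names `ALLOWED_COMMANDS` and `is_allowed`, and the example commands, are hypothetical and exist only for illustration.

```python
import re

# Hypothetical allowlist: each pattern describes one command shape the agent may run.
# These patterns are illustrative assumptions, not part of any real Statespace app.
ALLOWED_COMMANDS = [
    # A single read-only SELECT; no semicolons or quotes allowed inside the query.
    re.compile(r"psql -c 'SELECT [^;']+'"),
    # Reading a Markdown file directly under docs/ (no slashes or dots in the name).
    re.compile(r"cat docs/[\w-]+\.md"),
]


def is_allowed(command: str) -> bool:
    """Return True only if the entire command matches one allowlisted pattern."""
    return any(pattern.fullmatch(command) for pattern in ALLOWED_COMMANDS)


if __name__ == "__main__":
    for cmd in [
        "cat docs/revenue.md",
        "cat /etc/passwd",
        "psql -c 'SELECT count(*) FROM orders'",
        "psql -c 'SELECT 1; DROP TABLE orders'",
    ]:
        print(f"{cmd!r}: {'allowed' if is_allowed(cmd) else 'blocked'}")
```

Matching the whole command with `fullmatch`, rather than searching for a substring, is the design choice that matters here: anything the pattern does not explicitly describe is rejected, so tightening a guardrail means tightening a regex.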

But this is just the first phase. A good local workflow is the seed.

Deploy it when it’s worth sharing

Things get more interesting once the app is useful enough to publish.

Once you deploy a Statespace app, it becomes a shareable interface with a URL. A product manager’s agent can use it, another engineer’s coding agent can use it, and it can live in docs, workflows, or anywhere else in your stack that needs a bounded, domain-aware interface.

The goal is to replace “ask the one engineer who knows how this works” with “just use this app.”

Each app can encapsulate one logical unit of company context: how to query a messy revenue database, how to investigate an incident, or how to wrap some finicky internal tooling with the right caveats. Teams can publish those capabilities, reuse them, and keep improving them over time.

Deployed apps also come with the operational pieces companies actually care about: access control, traces, logs, auditability, a dashboard per app, and a single place to keep iterating on the system.

What AI-native should mean

This is why I keep coming back to the phrase “AI-native,” even though it’s a bit overused.

An AI-native company is one where useful context compounds instead of disappearing into chat history, where the best workflow does not stay trapped on one engineer’s laptop, and where humans and agents can plug into the same hard-won operational knowledge instead of rediscovering it from scratch every time.

That is where Statespace apps start to feel like real infrastructure.

We first saw this clearly in databases, because they make the problem obvious: the schema alone is never enough, and the real business meaning lives in context. But the same pattern shows up anywhere agents need more than raw access.

Statespace is our bet that AI-native companies will be built out of many small, explicit, domain-owned systems that teams can publish, share, and improve together.